SEO check"An SEO check involves analyzing a website's performance to identify strengths and weaknesses."

SEO companies in Australia"SEO companies in Australia offer comprehensive services designed to improve website rankings and increase organic traffic. By combining technical expertise, creative content strategies, and data analysis, these companies help businesses achieve sustainable growth and build a strong online presence."

SEO company in Sydney"A reputable SEO company in Sydney offers businesses a full suite of optimization services, including keyword research, on-page optimization, and backlink strategies. By delivering customized solutions, these companies help clients achieve higher rankings and long-term success."

SEO company Sydney"An SEO company in Sydney provides businesses with customized optimization solutions that improve search rankings and drive traffic. By offering services like keyword research, content creation, technical audits, and link building, these companies help clients achieve their digital marketing goals."

SEO consultant"A professional SEO consultant provides businesses with the expertise needed to improve search rankings and increase organic traffic. By conducting detailed audits, identifying keyword opportunities, and implementing tailored strategies, these consultants deliver measurable improvements."

SEO consultant Sydney"A skilled SEO consultant in Sydney provides businesses with personalized guidance and expertise to improve their search rankings. By conducting in-depth audits, identifying keyword opportunities, and implementing targeted strategies, these consultants help clients achieve long-term success in their digital marketing efforts."

SEO consultants Sydney"Sydney-based SEO consultants provide expert guidance to improve search rankings, increase organic traffic, and drive conversions. With a focus on data-driven strategies and continuous improvement, these consultants help businesses achieve sustainable growth."

SEO content marketing"SEO content marketing focuses on creating high-quality, keyword-optimized content that drives organic traffic. By combining SEO best practices with engaging storytelling, businesses can improve search rankings, attract more visitors, and convert readers into customers."

SEO conversion optimization"SEO conversion optimization involves creating content and calls-to-action that guide visitors toward completing desired actions. By combining effective SEO techniques with user-friendly design and clear messaging, businesses can increase conversions and maximize the return on their optimization efforts."

SEO copywriting"SEO copywriting combines engaging content with strategic keyword usage."

SEO cost analysis"SEO cost analysis helps businesses understand the financial investment required for effective optimization. By evaluating tools, services, and time commitments, companies can make informed decisions and ensure they allocate resources efficiently for the best results."

SEO expert"An SEO expert provides businesses with the guidance and strategies needed to improve their search rankings. By analyzing data, optimizing website elements, and implementing proven techniques, these experts help clients achieve sustainable growth and a strong online presence."

SEO expert Sydney"A seasoned SEO expert in Sydney helps businesses navigate the complexities of search engine optimization. By analyzing data, refining strategies, and staying current with algorithm changes, these experts deliver measurable improvements in search rankings and website performance."

SEO experts"SEO experts specialize in improving website performance, increasing organic traffic, and enhancing search rankings. By leveraging advanced techniques, data-driven strategies, and industry best practices, these experts help businesses achieve measurable and lasting results."

SEO experts Sydney"SEO experts in Sydney combine technical knowledge, creative strategies, and industry insights to deliver outstanding results. With a focus on data-driven decision-making and continuous improvement, these experts help businesses achieve and maintain top search rankings."

SEO for blogs"SEO for blogs focuses on optimizing individual blog posts with relevant keywords, compelling meta descriptions, and clear headings. By creating well-structured, valuable content, bloggers can attract more readers, rank higher in search results, and build a loyal audience."

SEO for ecommerce"SEO for ecommerce focuses on optimizing product pages, category pages, and site structure to increase visibility and drive sales.

Web design encompasses many different skills and disciplines in the production and maintenance of websites. The different areas of web design include web graphic design; user interface design (UI design); authoring, including standardised code and proprietary software; user experience design (UX design); and search engine optimization. Often many individuals will work in teams covering different aspects of the design process, although some designers will cover them all.[1] The term "web design" is normally used to describe the design process relating to the front-end (client side) design of a website including writing markup. Web design partially overlaps web engineering in the broader scope of web development. Web designers are expected to have an awareness of usability and be up to date with web accessibility guidelines.

Although web design has a fairly recent history, it can be linked to other areas such as graphic design, user experience, and multimedia arts, but is more aptly seen from a technological standpoint. It has become a large part of people's everyday lives. It is hard to imagine the Internet without animated graphics, different styles of typography, backgrounds, videos and music. The web was announced on August 6, 1991; in November 1992, CERN was the first website to go live on the World Wide Web. During this period, websites were structured by using the <table> tag which created numbers on the website. Eventually, web designers were able to find their way around it to create more structures and formats. In early history, the structure of the websites was fragile and hard to contain, so it became very difficult to use them. In November 1993, ALIWEB was the first ever search engine to be created (Archie Like Indexing for the WEB).[2]

In 1989, whilst working at CERN in Switzerland, British scientist Tim Berners-Lee proposed to create a global hypertext project, which later became known as the World Wide Web. From 1991 to 1993 the World Wide Web was born. Text-only HTML pages could be viewed using a simple line-mode web browser.[3] In 1993 Marc Andreessen and Eric Bina created the Mosaic browser. At the time there were multiple browsers; however, the majority of them were Unix-based and naturally text-heavy. There had been no integrated approach to graphic design elements such as images or sounds. The Mosaic browser broke this mould.[4] The W3C was created in October 1994 to "lead the World Wide Web to its full potential by developing common protocols that promote its evolution and ensure its interoperability."[5] This discouraged any one company from monopolizing a proprietary browser and programming language, which could have altered the effect of the World Wide Web as a whole. The W3C continues to set standards, which can today be seen with JavaScript and other languages. In 1994 Andreessen formed Mosaic Communications Corp., which later became known as Netscape Communications and released the Netscape 0.9 browser. Netscape created its HTML tags without regard to the traditional standards process. For example, Netscape 1.1 included tags for changing background colours and formatting text with tables on web pages. From 1996 to 1999 the browser wars took place, as Microsoft and Netscape fought for ultimate browser dominance. During this time there were many new technologies in the field, notably Cascading Style Sheets, JavaScript, and Dynamic HTML. On the whole, the browser competition did lead to many positive creations and helped web design evolve at a rapid pace.[6]

In 1996, Microsoft released its first competitive browser, which was complete with its features and HTML tags. It was also the first browser to support style sheets, which at the time was seen as an obscure authoring technique and is today an important aspect of web design.[6] The HTML markup for tables was originally intended for displaying tabular data. However, designers quickly realized the potential of using HTML tables for creating complex, multi-column layouts that were otherwise not possible. At this time, as design and good aesthetics seemed to take precedence over good markup structure, little attention was paid to semantics and web accessibility. HTML sites were limited in their design options, even more so with earlier versions of HTML. To create complex designs, many web designers had to use complicated table structures or even use blank spacer .GIF images to stop empty table cells from collapsing.[7] CSS was introduced in December 1996 by the W3C to support presentation and layout. This allowed HTML code to be semantic rather than both semantic and presentational and improved web accessibility, see tableless web design.

In 1996, Flash (originally known as FutureSplash) was developed. At the time, the Flash content development tool was relatively simple compared to now, using basic layout and drawing tools, a limited precursor to ActionScript, and a timeline, but it enabled web designers to go beyond the point of HTML, animated GIFs and JavaScript. However, because Flash required a plug-in, many web developers avoided using it for fear of limiting their market share due to lack of compatibility. Instead, designers reverted to GIF animations (if they did not forego using motion graphics altogether) and JavaScript for widgets. But the benefits of Flash made it popular enough among specific target markets to eventually work its way to the vast majority of browsers, and powerful enough to be used to develop entire sites.[7]

In 1998, Netscape released Netscape Communicator code under an open-source licence, enabling thousands of developers to participate in improving the software. However, these developers decided to start a standard for the web from scratch, which guided the development of the open-source browser and soon expanded to a complete application platform.[6] The Web Standards Project was formed and promoted browser compliance with HTML and CSS standards. Programs like Acid1, Acid2, and Acid3 were created in order to test browsers for compliance with web standards. In 2000, Internet Explorer was released for Mac, which was the first browser that fully supported HTML 4.01 and CSS 1. It was also the first browser to fully support the PNG image format.[6] By 2001, after a campaign by Microsoft to popularize Internet Explorer, Internet Explorer had reached 96% of web browser usage share, which signified the end of the first browser wars as Internet Explorer had no real competition.[8]

Since the start of the 21st century, the web has become more and more integrated into people's lives. As this has happened the technology of the web has also moved on. There have also been significant changes in the way people use and access the web, and this has changed how sites are designed.

Since the end of the browser wars, new browsers have been released. Many of these are open source, meaning that they tend to have faster development and are more supportive of new standards. The new options are considered by many to be better than Microsoft's Internet Explorer.

The W3C has released new standards for HTML (HTML5) and CSS (CSS3), as well as new JavaScript APIs, each as a new but individual standard. While the term HTML5 is only used to refer to the new version of HTML and some of the JavaScript APIs, it has become common to use it to refer to the entire suite of new standards (HTML5, CSS3 and JavaScript).

With the advancements in 3G and LTE internet coverage, a significant portion of website traffic shifted to mobile devices. This shift influenced the web design industry, steering it towards a minimalist, lighter, and more simplistic style. The "mobile first" approach emerged as a result, emphasizing the creation of website designs that prioritize mobile-oriented layouts first, before adapting them to larger screen dimensions.

Web designers use a variety of different tools depending on what part of the production process they are involved in. These tools are updated over time by newer standards and software but the principles behind them remain the same. Web designers use both vector and raster graphics editors to create web-formatted imagery or design prototypes. A website can be created using WYSIWYG website builder software or a content management system, or the individual web pages can be hand-coded in just the same manner as the first web pages were created. Other tools web designers might use include markup validators[9] and other testing tools for usability and accessibility to ensure their websites meet web accessibility guidelines.[10]

One discipline closely tied to web design is user experience (UX) design, which shapes products around an accurate understanding of their users. UX design is a broad field: it extends beyond the web, and its fundamentals can be applied to browsers, apps, and other products. Web design, by contrast, deals mostly with web-based products. The two overlap, but UX design often focuses on products that are less web-based.[11]

Marketing and communication design on a website may identify what works for its target market. This can be an age group or particular strand of culture; thus the designer may understand the trends of its audience. Designers may also understand the type of website they are designing, meaning, for example, that business-to-business (B2B) website design considerations might differ greatly from those of a consumer-targeted website such as a retail or entertainment website. Careful consideration might be made to ensure that the aesthetics or overall design of a site do not clash with the clarity and accuracy of the content or the ease of web navigation,[12] especially on a B2B website. Designers may also consider the reputation of the owner or business the site is representing to make sure they are portrayed favorably. Web designers normally oversee how the websites they build work and operate, and they constantly update and change those websites behind the scenes. The elements they work with include text, photos, graphics, and page layout. Before beginning work on a website, web designers normally set an appointment with their clients to discuss layout, colour, graphics, and design. Web designers spend the majority of their time designing websites and making sure the sites perform at an acceptable speed. They also typically test their work, take part in marketing, and communicate with other designers about laying out websites and finding the right elements for them.[13]

User understanding of the content of a website often depends on user understanding of how the website works. This is part of the user experience design. User experience is related to layout, clear instructions, and labeling on a website. How well a user understands how they can interact on a site may also depend on the interactive design of the site. If a user perceives the usefulness of the website, they are more likely to continue using it. Users who are skilled and well versed in website use may find a more distinctive, yet less intuitive or less user-friendly website interface useful nonetheless. However, users with less experience are less likely to see the advantages or usefulness of a less intuitive website interface. This drives the trend for a more universal user experience and ease of access to accommodate as many users as possible regardless of user skill.[14] Much of the user experience design and interactive design are considered in the user interface design.

Advanced interactive functions may require plug-ins if not advanced coding language skills. Choosing whether or not to use interactivity that requires plug-ins is a critical decision in user experience design. If the plug-in doesn't come pre-installed with most browsers, there's a risk that the user will have neither the know-how nor the patience to install a plug-in just to access the content. If the function requires advanced coding language skills, it may be too costly in either time or money to code compared to the amount of enhancement the function will add to the user experience. There's also a risk that advanced interactivity may be incompatible with older browsers or hardware configurations. Publishing a function that doesn't work reliably is potentially worse for the user experience than making no attempt. It depends on the target audience if it's likely to be needed or worth any risks.

Progressive enhancement is a strategy in web design that puts emphasis on web content first, allowing everyone to access the basic content and functionality of a web page, whilst users with additional browser features or faster Internet access receive the enhanced version instead.

In practice, this means serving content through HTML and applying styling and animation through CSS to the technically possible extent, then applying further enhancements through JavaScript. Pages' text is loaded immediately through the HTML source code rather than having to wait for JavaScript to initiate and load the content subsequently, which allows content to be readable with minimum loading time and bandwidth, and through text-based browsers, and maximizes backwards compatibility.[15]

As an example, MediaWiki-based sites including Wikipedia use progressive enhancement, as they remain usable while JavaScript and even CSS is deactivated, as pages' content is included in the page's HTML source code, whereas counter-example Everipedia relies on JavaScript to load pages' content subsequently; a blank page appears with JavaScript deactivated.
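One rough way to check whether a page follows this approach is to read its raw HTML and see whether any readable text is present before a script runs. The following is a minimal sketch using Python's standard `html.parser` module; the class name, the function name, and both sample pages are assumptions made for illustration, not taken from any of the sites mentioned above.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collects text found outside <script> and <style> elements."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0  # greater than 0 while inside <script> or <style>
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def text_without_javascript(html):
    """Return the text available with JavaScript and CSS switched off."""
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


# Hypothetical pages: one ships its content in the HTML, one loads it by script.
enhanced_page = ("<html><body><h1>Web design</h1><p>Article text.</p>"
                 "<script>enhance()</script></body></html>")
script_only_page = ("<html><body><div id='app'></div>"
                    "<script>loadContent()</script></body></html>")

print(text_without_javascript(enhanced_page))           # Web design Article text.
print(repr(text_without_javascript(script_only_page)))  # ''
```

An empty result for the second page mirrors the blank page a reader would see with JavaScript deactivated.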

Part of the user interface design is affected by the quality of the page layout. For example, a designer may consider whether the site's page layout should remain consistent on different pages when designing the layout. Page pixel width may also be considered vital for aligning objects in the layout design. The most popular fixed-width websites generally have the same set width to match the current most popular browser window, at the current most popular screen resolution, on the current most popular monitor size. Most pages are also center-aligned for concerns of aesthetics on larger screens.

Fluid layouts increased in popularity around 2000, allowing the browser to make user-specific layout adjustments based on the details of the reader's screen (window size, font size relative to window, etc.). They grew as an alternative to HTML-table-based layouts and grid-based design in both page layout design principles and in coding technique but were very slow to be adopted.[note 1] This was due to considerations of screen reading devices and varying window sizes, over which designers have no control. Accordingly, a design may be broken down into units (sidebars, content blocks, embedded advertising areas, navigation areas) that are sent to the browser and which will be fitted into the display window by the browser, as best it can. Such a display may often change the relative position of major content units: sidebars, for example, may be displaced below body text rather than to the side of it. Even so, this is a more flexible display than a hard-coded grid-based layout that doesn't fit the device window. In particular, the relative position of content blocks may change while leaving the content within the block unaffected. This also minimizes the user's need to horizontally scroll the page.

Responsive web design is a newer approach, based on CSS3, and a deeper level of per-device specification within the page's style sheet through an enhanced use of the CSS @media rule. In March 2018 Google announced they would be rolling out mobile-first indexing.[16] Sites using responsive design are well placed to ensure they meet this new approach.

Web designers may choose to limit the variety of website typefaces to only a few which are of a similar style, instead of using a wide range of typefaces or type styles. Most browsers recognize a specific number of safe fonts, which designers mainly use in order to avoid complications.

Font downloading was later included in the CSS3 fonts module and has since been implemented in Safari 3.1, Opera 10, and Mozilla Firefox 3.5. This has subsequently increased interest in web typography, as well as the usage of font downloading.

Most site layouts incorporate negative space to break the text up into paragraphs and also avoid center-aligned text.[17]

The page layout and user interface may also be affected by the use of motion graphics. The choice of whether or not to use motion graphics may depend on the target market for the website. Motion graphics may be expected or at least better received with an entertainment-oriented website. However, a website target audience with a more serious or formal interest (such as business, community, or government) might find animations unnecessary and distracting if only for entertainment or decoration purposes. This doesn't mean that more serious content couldn't be enhanced with animated or video presentations that are relevant to the content. In either case, motion graphic design may make the difference between more effective visuals or distracting visuals.

Motion graphics that are not initiated by the site visitor can produce accessibility issues. The World Wide Web Consortium accessibility standards require that site visitors be able to disable the animations.[18]

Website designers may consider it to be good practice to conform to standards. This is usually done via a description specifying what the element is doing. Failure to conform to standards may not make a website unusable or error-prone, but standards can relate to the correct layout of pages for readability as well as making sure coded elements are closed appropriately. This includes errors in code, a more organized layout for code, and making sure IDs and classes are identified properly. Poorly coded pages are sometimes colloquially called tag soup. Validating via W3C[9] can only be done when a correct DOCTYPE declaration is made, which is used to highlight errors in code. The system identifies the errors and areas that do not conform to web design standards. This information can then be corrected by the user.[19]
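To make the idea of "coded elements closed appropriately" concrete, the sketch below reports tags that are opened but never closed, or closed without being opened. It is an illustrative assumption built on Python's standard `html.parser`, not the W3C validator, and it is stricter than the HTML specification because it also flags elements such as `<p>` or `<li>` whose end tags are optional. The names `TagBalanceChecker` and `find_unbalanced_tags` are hypothetical.

```python
from html.parser import HTMLParser

# Elements that never take a closing tag in HTML.
VOID_ELEMENTS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
                 "link", "meta", "source", "track", "wbr"}


class TagBalanceChecker(HTMLParser):
    """Tracks open elements on a stack and records mismatches."""

    def __init__(self):
        super().__init__()
        self.open_tags = []
        self.problems = []

    def handle_starttag(self, tag, attrs):
        if tag not in VOID_ELEMENTS:
            self.open_tags.append(tag)

    def handle_endtag(self, tag):
        if tag in VOID_ELEMENTS:
            return
        if tag not in self.open_tags:
            self.problems.append(f"unexpected </{tag}>")
            return
        # Pop back to the matching opener; anything above it was never closed.
        while self.open_tags:
            top = self.open_tags.pop()
            if top == tag:
                break
            self.problems.append(f"unclosed <{top}>")


def find_unbalanced_tags(html):
    """Return a list of human-readable problems; empty means balanced."""
    checker = TagBalanceChecker()
    checker.feed(html)
    checker.close()
    return checker.problems + [f"unclosed <{t}>" for t in checker.open_tags]


print(find_unbalanced_tags("<div><p>ok</p></div>"))  # []
print(find_unbalanced_tags("<div><p>text</div>"))    # ['unclosed <p>']
```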

There are two ways websites are generated: statically or dynamically.

A static website stores a unique file for every page. Each time that page is requested, the same content is returned. This content is created once, during the design of the website. It is usually manually authored, although some sites use an automated creation process, similar to a dynamic website, whose results are stored long-term as completed pages. These automatically created static sites became more popular around 2015, with generators such as Jekyll and Adobe Muse.[20]

The benefits of static websites were that they were simpler to host, as their server only needed to serve static content, not execute server-side scripts. This required less server administration and had less chance of exposing security holes. They could also serve pages more quickly, on low-cost server hardware. This advantage became less important as cheap web hosting expanded to also offer dynamic features, and virtual servers offered high performance for short intervals at low cost.

Almost all websites have some static content, as supporting assets such as images and style sheets are usually static, even on a website with highly dynamic pages.
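The automated creation process described for static sites can be sketched in a few lines: every page is rendered once, at build time, and stored as its own file. This is a minimal illustration and not how Jekyll or any other named generator works internally; the template, the page data, and the function name `build_static_site` are assumptions, and the content is treated as trusted (no HTML escaping).

```python
from pathlib import Path
from string import Template
import tempfile

# Hypothetical page template; $title and $body are filled in at build time.
PAGE_TEMPLATE = Template("<html><head><title>$title</title></head>"
                         "<body><h1>$title</h1><p>$body</p></body></html>")


def build_static_site(pages, output_dir):
    """Render every page once and store it as its own .html file."""
    output_dir = Path(output_dir)
    output_dir.mkdir(parents=True, exist_ok=True)
    written = []
    for slug, fields in pages.items():
        target = output_dir / f"{slug}.html"
        target.write_text(PAGE_TEMPLATE.substitute(fields), encoding="utf-8")
        written.append(target)
    return written


# Hypothetical content; a real generator would read it from source files.
pages = {
    "index": {"title": "Home", "body": "Welcome."},
    "about": {"title": "About", "body": "Who we are."},
}

with tempfile.TemporaryDirectory() as tmp:
    files = build_static_site(pages, tmp)
    print(sorted(f.name for f in files))  # ['about.html', 'index.html']
```

After the build, a web server only has to return the stored files; no script runs when a page is requested.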

Dynamic websites are generated on the fly and use server-side technology to generate web pages. They typically extract their content from one or more back-end databases: some are database queries across a relational database to query a catalog or to summarise numeric information, and others may use a NoSQL document database such as MongoDB to store larger units of content, such as blog posts or wiki articles.

In the design process, dynamic pages are often mocked-up or wireframed using static pages. The skillset needed to develop dynamic web pages is much broader than for a static page, involving server-side and database coding as well as client-side interface design. Even medium-sized dynamic projects are thus almost always a team effort.

When dynamic web pages first developed, they were typically coded directly in languages such as Perl, PHP or ASP. Some of these, notably PHP and ASP, used a 'template' approach where a server-side page resembled the structure of the completed client-side page, and data was inserted into places defined by 'tags'. This was a quicker means of development than coding in a purely procedural coding language such as Perl.
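The 'template' approach can be illustrated with a short sketch in which a page is assembled at request time from a database row and a template with placeholder tags. The example uses Python's standard `sqlite3` and `string.Template` rather than PHP or ASP, and the table, the product data, and the function names are assumptions chosen for illustration.

```python
import sqlite3
from html import escape
from string import Template

# Server-side template: $name and $price mark where data is inserted.
PRODUCT_PAGE = Template("<h1>$name</h1><p>Price: $price</p>")


def make_catalog():
    """Create a throwaway in-memory catalog (a stand-in for a real database)."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE products (id INTEGER PRIMARY KEY, name TEXT, price REAL)")
    db.execute("INSERT INTO products (id, name, price) VALUES (1, 'Desk lamp', 19.5)")
    db.commit()
    return db


def render_product(db, product_id):
    """Build the page at request time from whatever the database holds now."""
    row = db.execute(
        "SELECT name, price FROM products WHERE id = ?", (product_id,)
    ).fetchone()
    if row is None:
        return "<h1>Not found</h1>"
    name, price = row
    # Escape database text before inserting it into markup.
    return PRODUCT_PAGE.substitute(name=escape(name), price=f"{price:.2f}")


db = make_catalog()
print(render_product(db, 1))  # <h1>Desk lamp</h1><p>Price: 19.50</p>
```

Because the page is rebuilt on every call, changing the row in the database changes the next response without touching the template.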

Both of these approaches have now been supplanted for many websites by higher-level application-focused tools such as content management systems. These build on top of general-purpose coding platforms and assume that a website exists to offer content according to one of several well-recognised models, such as a time-sequenced blog, a thematic magazine or news site, a wiki, or a user forum. These tools make the implementation of such a site very easy, and a purely organizational and design-based task, without requiring any coding.

Editing the content itself (as well as the template page) can be done both by means of the site itself and with the use of third-party software. The ability to edit all pages is provided only to a specific category of users (for example, administrators, or registered users). In some cases, which are less frequent, anonymous users are allowed to edit certain web content (for example, adding messages on forums). An example of a site that allows anonymous editing is Wikipedia.

Usability experts, including Jakob Nielsen and Kyle Soucy, have often emphasised homepage design for website success and asserted that the homepage is the most important page on a website[21][22][23] (see Nielsen, Jakob; Tahir, Marie (October 2001), Homepage Usability: 50 Websites Deconstructed, New Riders Publishing, ISBN 978-0-7357-1102-0). However, practitioners into the 2000s were starting to find that a growing share of website traffic was bypassing the homepage, going directly to internal content pages through search engines, e-newsletters and RSS feeds.[24] This led many practitioners to argue that homepages are less important than most people think.[25][26][27][28] Jared Spool argued in 2007 that a site's homepage was actually the least important page on a website.[29]

In 2012 and 2013, carousels (also called 'sliders' and 'rotating banners') became an extremely popular design element on homepages, often used to showcase featured or recent content in a confined space.[30] Many practitioners argue that carousels are an ineffective design element and hurt a website's search engine optimisation and usability.[30][31][32]

There are two primary jobs involved in creating a website: the web designer and web developer, who often work closely together on a website.[33] The web designers are responsible for the visual aspect, which includes the layout, colouring, and typography of a web page. Web designers will also have a working knowledge of markup languages such as HTML and CSS, although the extent of their knowledge will differ from one web designer to another. Particularly in smaller organizations, one person will need the necessary skills for designing and programming the full web page, while larger organizations may have a web designer responsible for the visual aspect alone.

Further jobs which may become involved in the creation of a website include:

ChatGPT and other AI models are being used to write and code websites, making it faster and easier to create them. There are still discussions about the ethical implications of using artificial intelligence for design as the world becomes more familiar with using AI for time-consuming tasks in design processes.[34]

Note 1: <table>-based markup and spacer .GIF images

Search engine optimization (SEO) is the process of improving the quality and quantity of website traffic to a website or a web page from search engines.[1][2] SEO targets unpaid search traffic (usually referred to as "organic" results) rather than direct traffic, referral traffic, social media traffic, or paid traffic.

Unpaid search engine traffic may originate from a variety of kinds of searches, including image search, video search, academic search,[3] news search, and industry-specific vertical search engines.

As an Internet marketing strategy, SEO considers how search engines work, the computer-programmed algorithms that dictate search engine results, what people search for, the actual search queries or keywords typed into search engines, and which search engines are preferred by a target audience. SEO is performed because a website will receive more visitors from a search engine when websites rank higher within a search engine results page (SERP), with the aim of either converting the visitors or building brand awareness.[4]

Webmasters and content providers began optimizing websites for search engines in the mid-1990s, as the first search engines were cataloging the early Web. Initially, webmasters submitted the address of a page, or URL, to the various search engines, which would send a web crawler to crawl that page, extract links to other pages from it, and return information found on the page to be indexed.[5]
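The link-extraction step a crawler performs on a submitted page can be sketched as follows. This is a minimal illustration using Python's standard `html.parser` and `urllib.parse`, not the implementation of any particular search engine; the class name, the function name, and the example URLs are assumptions, and no network request is made.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collects the targets of <a href="..."> elements as absolute URLs."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links against the address of the page.
                self.links.append(urljoin(self.base_url, value))


def extract_links(base_url, html):
    """Return every link found on the page, in document order."""
    parser = LinkExtractor(base_url)
    parser.feed(html)
    parser.close()
    return parser.links


# Hypothetical page content, standing in for a fetched document.
page = '<p><a href="/about">About</a> <a href="https://example.org/">Elsewhere</a></p>'
print(extract_links("https://example.com/index.html", page))
# ['https://example.com/about', 'https://example.org/']
```

A crawler would add the returned addresses to its queue of pages to visit next.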

According to a 2004 article by former industry analyst and current Google employee Danny Sullivan, the phrase "search engine optimization" probably came into use in 1997. Sullivan credits SEO practitioner Bruce Clay as one of the first people to popularize the term.[6]

Early versions of search algorithms relied on webmaster-provided information such as the keyword meta tag or index files in engines like ALIWEB. Meta tags provide a guide to each page's content. Using metadata to index pages was found to be less than reliable, however, because the webmaster's choice of keywords in the meta tag could potentially be an inaccurate representation of the site's actual content. Flawed data in meta tags, such as those that were inaccurate or incomplete, created the potential for pages to be mischaracterized in irrelevant searches.[7] Web content providers also manipulated attributes within the HTML source of a page in an attempt to rank well in search engines.[8] By 1997, search engine designers recognized that webmasters were making efforts to rank in search engines and that some webmasters were manipulating their rankings in search results by stuffing pages with excessive or irrelevant keywords. Early search engines, such as AltaVista and Infoseek, adjusted their algorithms to prevent webmasters from manipulating rankings.[9]

By heavily relying on factors such as keyword density, which were exclusively within a webmaster's control, early search engines suffered from abuse and ranking manipulation. To provide better results to their users, search engines had to adapt to ensure their results pages showed the most relevant search results, rather than unrelated pages stuffed with numerous keywords by unscrupulous webmasters. This meant moving away from heavy reliance on term density to a more holistic process for scoring semantic signals.[10]
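Keyword density is simple to compute, which is part of why it was easy to abuse. The following is a minimal sketch, assuming density is defined as the share of words on a page that match a single keyword; the function name `keyword_density` and both sample texts are hypothetical and are not taken from any search engine.

```python
import re
from collections import Counter


def keyword_density(text: str, keyword: str) -> float:
    """Return the fraction of words in `text` equal to `keyword` (case-insensitive)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    return Counter(words)[keyword.lower()] / len(words)


# Hypothetical page texts: one written for readers, one stuffed with a keyword.
natural = "We repair bicycles and sell spare parts for most brands."
stuffed = "Cheap bikes cheap bikes buy cheap bikes best cheap bikes cheap"

print(f"natural: {keyword_density(natural, 'cheap'):.2f}")
print(f"stuffed: {keyword_density(stuffed, 'cheap'):.2f}")
```

Because the page author controls every input to this number, a ranking signal built on it alone can be inflated at will, which is the weakness the paragraph above describes.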

Search engines responded by developing more complex ranking algorithms, taking into account additional factors that were more difficult for webmasters to manipulate.

Some search engines have also reached out to the SEO industry and are frequent sponsors and guests at SEO conferences, webchats, and seminars. Major search engines provide information and guidelines to help with website optimization.[11][12] Google has a Sitemaps program to help webmasters learn if Google is having any problems indexing their website and also provides data on Google traffic to the website.[13] Bing Webmaster Tools provides a way for webmasters to submit a sitemap and web feeds, and allows users to determine the "crawl rate" and track the index status of their web pages.

In 2015, it was reported that Google was developing and promoting mobile search as a key feature within future products. In response, many brands began to take a different approach to their Internet marketing strategies.[14]

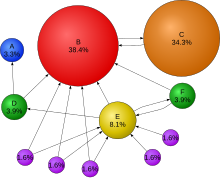

In 1998, two graduate students at Stanford University, Larry Page and Sergey Brin, developed "Backrub", a search engine that relied on a mathematical algorithm to rate the prominence of web pages. The number calculated by the algorithm, PageRank, is a function of the quantity and strength of inbound links.[15] PageRank estimates the likelihood that a given page will be reached by a web user who randomly surfs the web and follows links from one page to another. In effect, this means that some links are stronger than others, as a higher PageRank page is more likely to be reached by the random web surfer.
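The random-surfer idea can be illustrated with a short power-iteration sketch. This is not Google's implementation: the damping factor of 0.85 is an assumed, commonly cited value, and the function name `pagerank` and the four-page `toy_web` graph are hypothetical.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Approximate PageRank by power iteration.

    `links` maps each page to the list of pages it links to.
    Every link target must also appear as a key.
    """
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # With probability (1 - damping) the surfer jumps to a random page.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, outlinks in links.items():
            # A page with no outlinks spreads its rank over all pages.
            targets = outlinks if outlinks else pages
            share = damping * rank[page] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical site: nothing links to "orphan", several pages link to "home".
toy_web = {
    "home": ["about", "blog"],
    "about": ["home"],
    "blog": ["home", "about"],
    "orphan": ["home"],
}
ranks = pagerank(toy_web)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page}: {score:.3f}")
```

In this toy graph "home" ends up with the highest score and "orphan" with the lowest, so a link from "home" passes on more rank than a link from "orphan", matching the observation that some links are stronger than others.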

Page and Brin founded Google in 1998.[16] Google attracted a loyal following among the growing number of Internet users, who liked its simple design.[17] Off-page factors (such as PageRank and hyperlink analysis) were considered as well as on-page factors (such as keyword frequency, meta tags, headings, links and site structure) to enable Google to avoid the kind of manipulation seen in search engines that only considered on-page factors for their rankings. Although PageRank was more difficult to game, webmasters had already developed link-building tools and schemes to influence the Inktomi search engine, and these methods proved similarly applicable to gaming PageRank. Many sites focus on exchanging, buying, and selling links, often on a massive scale. Some of these schemes involved the creation of thousands of sites for the sole purpose of link spamming.[18]

By 2004, search engines had incorporated a wide range of undisclosed factors in their ranking algorithms to reduce the impact of link manipulation.[19] The leading search engines, Google, Bing, and Yahoo, do not disclose the algorithms they use to rank pages. Some SEO practitioners have studied different approaches to search engine optimization and have shared their personal opinions.[20] Patents related to search engines can provide information to better understand search engines.[21] In 2005, Google began personalizing search results for each user. Depending on their history of previous searches, Google crafted results for logged in users.[22]

In 2007, Google announced a campaign against paid links that transfer PageRank.[23] On June 15, 2009, Google disclosed that they had taken measures to mitigate the effects of PageRank sculpting by use of the nofollow attribute on links. Matt Cutts, a well-known software engineer at Google, announced that Googlebot would no longer treat nofollowed links in the same way, to prevent SEO service providers from using nofollow for PageRank sculpting.[24] As a result of this change, the usage of nofollow led to evaporation of PageRank. To avoid this, SEO engineers developed alternative techniques that replace nofollowed tags with obfuscated JavaScript and thus permit PageRank sculpting. Additionally, several solutions have been suggested that include the usage of iframes, Flash, and JavaScript.[25]

In December 2009, Google announced it would be using the web search history of all its users in order to populate search results.[26] On June 8, 2010, a new web indexing system called Google Caffeine was announced. Designed to allow users to find news results, forum posts, and other content much sooner after publishing than before, Google Caffeine was a change to the way Google updated its index in order to make things show up quicker on Google than before. According to Carrie Grimes, the software engineer who announced Caffeine for Google, "Caffeine provides 50 percent fresher results for web searches than our last index..."[27] Google Instant, a real-time search feature, was introduced in late 2010 in an attempt to make search results more timely and relevant. Historically, site administrators have spent months or even years optimizing a website to increase search rankings. With the growth in popularity of social media sites and blogs, the leading engines made changes to their algorithms to allow fresh content to rank quickly within the search results.[28]

In February 2011, Google announced the Panda update, which penalizes websites containing content duplicated from other websites and sources. Historically, websites have copied content from one another and benefited in search engine rankings by engaging in this practice. However, Google implemented a new system that punishes sites whose content is not unique.[29] The 2012 Google Penguin attempted to penalize websites that used manipulative techniques to improve their rankings on the search engine.[30] Although Google Penguin has been presented as an algorithm aimed at fighting web spam, it really focuses on spammy links[31] by gauging the quality of the sites the links are coming from. The 2013 Google Hummingbird update featured an algorithm change designed to improve Google's natural language processing and semantic understanding of web pages. Hummingbird's language processing system falls under the newly recognized term of "conversational search", where the system pays more attention to each word in the query in order to better match the pages to the meaning of the query rather than a few words.[32] For content publishers and writers, Hummingbird is intended to resolve issues by getting rid of irrelevant content and spam, allowing Google to surface high-quality content and to rely on its creators as "trusted" authors.

In October 2019, Google announced they would start applying BERT models for English language search queries in the US. Bidirectional Encoder Representations from Transformers (BERT) was another attempt by Google to improve their natural language processing, but this time in order to better understand the search queries of their users.[33] In terms of search engine optimization, BERT was intended to connect users more easily to relevant content and increase the quality of traffic coming to websites that rank in the search engine results page.

The leading search engines, such as Google, Bing, and Yahoo!, use crawlers to find pages for their algorithmic search results. Pages that are linked from other search engine-indexed pages do not need to be submitted because they are found automatically. The Yahoo! Directory and DMOZ, two major directories which closed in 2014 and 2017 respectively, both required manual submission and human editorial review.[34] Google offers Google Search Console, for which an XML Sitemap feed can be created and submitted for free to ensure that all pages are found, especially pages that are not discoverable by automatically following links[35] in addition to their URL submission console.[36] Yahoo! formerly operated a paid submission service that guaranteed to crawl for a cost per click;[37] however, this practice was discontinued in 2009.
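An XML Sitemap of the kind mentioned above is a small file listing page URLs. The sketch below builds one with Python's standard library, assuming the public sitemaps.org 0.9 namespace; the helper name `build_sitemap` and the example.com URLs are hypothetical, and real sitemaps often carry optional fields that are omitted here.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal XML Sitemap document listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url  # special characters are escaped on output
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical pages, including one that no other page links to.
sitemap_xml = build_sitemap([
    "https://example.com/",
    "https://example.com/unlinked-landing-page",
])
print(sitemap_xml)
```

Listing a page here does not guarantee that it will be indexed; it only makes the URL discoverable when no link leads to it.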

Search engine crawlers may look at a number of different factors when crawling a site. Not every page is indexed by search engines. The distance of pages from the root directory of a site may also be a factor in whether or not pages get crawled.[38]

Mobile devices are used for the majority of Google searches.[39] In November 2016, Google announced a major change to the way they are crawling websites and started to make their index mobile-first, which means the mobile version of a given website becomes the starting point for what Google includes in their index.[40] In May 2019, Google updated the rendering engine of their crawler to be the latest version of Chromium (74 at the time of the announcement). Google indicated that they would regularly update the Chromium rendering engine to the latest version.[41] In December 2019, Google began updating the User-Agent string of their crawler to reflect the latest Chrome version used by their rendering service. The delay was to allow webmasters time to update their code that responded to particular bot User-Agent strings. Google ran evaluations and felt confident the impact would be minor.[42]

To avoid undesirable content in the search indexes, webmasters can instruct spiders not to crawl certain files or directories through the standard robots.txt file in the root directory of the domain. Additionally, a page can be explicitly excluded from a search engine's database by using a meta tag specific to robots (usually <meta name="robots" content="noindex"> ). When a search engine visits a site, the robots.txt located in the root directory is the first file crawled. The robots.txt file is then parsed and will instruct the robot as to which pages are not to be crawled. As a search engine crawler may keep a cached copy of this file, it may on occasion crawl pages a webmaster does not wish to crawl. Pages typically prevented from being crawled include login-specific pages such as shopping carts and user-specific content such as search results from internal searches. In March 2007, Google warned webmasters that they should prevent indexing of internal search results because those pages are considered search spam.[43]

In 2020, Google sunsetted the standard (and open-sourced their code) and now treats it as a hint rather than a directive. To adequately ensure that pages are not indexed, a page-level robots meta tag should be included.[44]
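How a crawler interprets robots.txt rules can be sketched with Python's standard `urllib.robotparser` module. The rules, the `ExampleBot` user agent, and the example.com URLs below are hypothetical, and individual search engines may apply their own matching rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that keeps crawlers out of cart and internal search pages.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(url, user_agent="ExampleBot"):
    """Return True if the parsed rules allow `user_agent` to fetch `url`."""
    return parser.can_fetch(user_agent, url)


for url in (
    "https://example.com/products/widget",
    "https://example.com/cart/checkout",
    "https://example.com/search/results",
):
    print(url, is_crawlable(url))
```

A disallow rule only asks crawlers not to fetch a URL. Keeping a page out of the index is the job of the page-level tag `<meta name="robots" content="noindex">`, which a crawler can only see if it is allowed to fetch the page.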

A variety of methods can increase the prominence of a webpage within the search results. Cross linking between pages of the same website to provide more links to important pages may improve its visibility. Page design makes users trust a site and want to stay once they find it. When people bounce off a site, it counts against the site and affects its credibility.[45]

Writing content that includes frequently searched keyword phrases so as to be relevant to a wide variety of search queries will tend to increase traffic. Updating content so as to keep search engines crawling back frequently can give additional weight to a site. Adding relevant keywords to a web page's metadata, including the title tag and meta description, will tend to improve the relevancy of a site's search listings, thus increasing traffic. URL canonicalization of web pages accessible via multiple URLs, using the canonical link element[46] or via 301 redirects, can help make sure links to different versions of the URL all count towards the page's link popularity score. Incoming links that point to the URL count towards this score, which in turn affects the credibility of a website.[45]
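The canonical link element is an ordinary `<link>` tag in a page's head, so it can be read with a standard HTML parser. The sketch below uses Python's `html.parser`; the class name `CanonicalFinder`, the helper `find_canonical`, and the sample page are hypothetical.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Record the href of the first <link rel="canonical"> element seen."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel_values and self.canonical is None:
            self.canonical = attributes.get("href")


def find_canonical(html):
    """Return the canonical URL declared in `html`, or None if there is none."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


# Hypothetical page reachable as /shoes, /shoes?ref=ad and /shoes/index.html.
page = """
<html><head>
  <title>Shoes</title>
  <link rel="canonical" href="https://example.com/shoes">
</head><body>...</body></html>
"""
print(find_canonical(page))
```

If every variant of the page declares the same canonical URL, links pointing at any variant can be credited to that one address.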

SEO techniques can be classified into two broad categories: techniques that search engine companies recommend as part of good design ("white hat"), and those techniques of which search engines do not approve ("black hat"). Search engines attempt to minimize the effect of the latter, among them spamdexing. Industry commentators have classified these methods and the practitioners who employ them as either white hat SEO or black hat SEO.[47] White hats tend to produce results that last a long time, whereas black hats anticipate that their sites may eventually be banned either temporarily or permanently once the search engines discover what they are doing.[48]

An SEO technique is considered a white hat if it conforms to the search engines' guidelines and involves no deception. As the search engine guidelines[11][12][49] are not written as a series of rules or commandments, this is an important distinction to note. White hat SEO is not just about following guidelines but is about ensuring that the content a search engine indexes and subsequently ranks is the same content a user will see. White hat advice is generally summed up as creating content for users, not for search engines, and then making that content easily accessible to the online "spider" algorithms, rather than attempting to trick the algorithm from its intended purpose. White hat SEO is in many ways similar to web development that promotes accessibility,[50] although the two are not identical.

Black hat SEO attempts to improve rankings in ways that are disapproved of by the search engines or involve deception. One black hat technique uses hidden text, either as text colored similar to the background, in an invisible div, or positioned off-screen. Another method gives a different page depending on whether the page is being requested by a human visitor or a search engine, a technique known as cloaking. Another category sometimes used is grey hat SEO. This is in between the black hat and white hat approaches, where the methods employed avoid the site being penalized but do not act in producing the best content for users. Grey hat SEO is entirely focused on improving search engine rankings.

Search engines may penalize sites they discover using black or grey hat methods, either by reducing their rankings or eliminating their listings from their databases altogether. Such penalties can be applied either automatically by the search engines' algorithms or by a manual site review. One example was the February 2006 Google removal of both BMW Germany and Ricoh Germany for the use of deceptive practices.[51] Both companies subsequently apologized, fixed the offending pages, and were restored to Google's search engine results page.[52]

Companies that employ black hat techniques or other spammy tactics can get their client websites banned from the search results. In 2005, the Wall Street Journal reported on a company, Traffic Power, which allegedly used high-risk techniques and failed to disclose those risks to its clients.[53] Wired magazine reported that the same company sued blogger and SEO Aaron Wall for writing about the ban.[54] Google's Matt Cutts later confirmed that Google had banned Traffic Power and some of its clients.[55]

SEO is not an appropriate strategy for every website, and other Internet marketing strategies can be more effective, such as paid advertising through pay-per-click (PPC) campaigns, depending on the site operator's goals. Search engine marketing (SEM) is the practice of designing, running, and optimizing search engine ad campaigns. Its difference from SEO is most simply depicted as the difference between paid and unpaid priority ranking in search results. SEM focuses on prominence more so than relevance; website developers should give SEM serious consideration for visibility, as most users navigate to the primary listings of their search.[56] A successful Internet marketing campaign may also depend upon building high-quality web pages to engage and persuade internet users, setting up analytics programs to enable site owners to measure results, and improving a site's conversion rate.[57][58] In November 2015, Google released a full 160-page version of its Search Quality Rating Guidelines to the public,[59] which revealed a shift in their focus towards "usefulness" and mobile local search. In recent years the mobile market has exploded, overtaking the use of desktops, as shown by StatCounter in October 2016, when it analyzed 2.5 million websites and found that 51.3% of the pages were loaded by a mobile device.[60] Google has been one of the companies capitalizing on the popularity of mobile usage by encouraging websites to use its Google Search Console and the Mobile-Friendly Test, which allow companies to measure their website against the search engine results and determine how user-friendly their websites are. The closer the keywords are together, the more their ranking will improve based on key terms.[45]

SEO may generate an adequate return on investment. However, search engines are not paid for organic search traffic, their algorithms change, and there are no guarantees of continued referrals. Due to this lack of guarantee and uncertainty, a business that relies heavily on search engine traffic can suffer major losses if the search engines stop sending visitors.[61] Search engines can change their algorithms, impacting a website's search engine ranking, possibly resulting in a serious loss of traffic. According to Google's CEO, Eric Schmidt, in 2010, Google made over 500 algorithm changes – almost 1.5 per day.[62] It is considered a wise business practice for website operators to liberate themselves from dependence on search engine traffic.[63] In addition to accessibility in terms of web crawlers (addressed above), user web accessibility has become increasingly important for SEO.

Optimization techniques are highly tuned to the dominant search engines in the target market. The search engines' market shares vary from market to market, as does competition. In 2003, Danny Sullivan stated that Google represented about 75% of all searches.[64] In markets outside the United States, Google's share is often larger, and data showed Google was the dominant search engine worldwide as of 2007.[65] As of 2006, Google had an 85–90% market share in Germany.[66] While there were hundreds of SEO firms in the US at that time, there were only about five in Germany.[66] As of March 2024, Google still had a significant market share of 89.85% in Germany.[67] As of June 2008, the market share of Google in the UK was close to 90% according to Hitwise.[68] As of March 2024, Google's market share in the UK was 93.61%.[69]

Successful search engine optimization (SEO) for international markets requires more than just translating web pages. It may also involve registering a domain name with a country-code top-level domain (ccTLD) or a relevant top-level domain (TLD) for the target market, choosing web hosting with a local IP address or server, and using a Content Delivery Network (CDN) to improve website speed and performance globally. It is also important to understand the local culture so that the content feels relevant to the audience. This includes conducting keyword research for each market, using hreflang tags to target the right languages, and building local backlinks. However, the core SEO principles—such as creating high-quality content, improving user experience, and building links—remain the same, regardless of language or region.[66]
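The hreflang tags mentioned above are `<link rel="alternate">` elements that point to each language or regional version of a page. The sketch below generates them; the helper name `hreflang_links`, the language codes chosen, and the example.com URLs are hypothetical.

```python
from html import escape


def hreflang_links(alternates, default_url=None):
    """Build <link rel="alternate" hreflang="..."> tags for a page's language versions.

    `alternates` maps a language or language-region code to that version's URL.
    `default_url`, if given, is emitted as the "x-default" fallback.
    """
    lines = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}">'
        for code, url in alternates.items()
    ]
    if default_url is not None:
        lines.append(
            f'<link rel="alternate" hreflang="x-default" href="{escape(default_url)}">'
        )
    return "\n".join(lines)


# Hypothetical site with Australian English and German versions.
tags = hreflang_links(
    {"en-au": "https://example.com/au/", "de-de": "https://example.com/de/"},
    default_url="https://example.com/",
)
print(tags)
```

Each language version of a page would normally carry the same full set of tags, including one that points to itself, so that the versions reference each other consistently.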

Regional search engines have a strong presence in specific markets:

By the early 2000s, businesses recognized that the web and search engines could help them reach global audiences. As a result, the need for multilingual SEO emerged.[74] In the early years of international SEO development, simple translation was seen as sufficient. However, over time, it became clear that localization and transcreation—adapting content to local language, culture, and emotional resonance—were far more effective than basic translation.[75]

On October 17, 2002, SearchKing filed suit in the United States District Court, Western District of Oklahoma, against the search engine Google. SearchKing's claim was that Google's tactics to prevent spamdexing constituted a tortious interference with contractual relations. On May 27, 2003, the court granted Google's motion to dismiss the complaint because SearchKing "failed to state a claim upon which relief may be granted."[76][77]

In March 2006, KinderStart filed a lawsuit against Google over search engine rankings. KinderStart's website was removed from Google's index prior to the lawsuit, and the amount of traffic to the site dropped by 70%. On March 16, 2007, the United States District Court for the Northern District of California (San Jose Division) dismissed KinderStart's complaint without leave to amend and partially granted Google's motion for Rule 11 sanctions against KinderStart's attorney, requiring him to pay part of Google's legal expenses.[78][79]


Local search engine optimization (local SEO) is similar to (national) SEO in that it is also a process affecting the visibility of a website or a web page in a web search engine's unpaid results (known as its SERP, search engine results page) often referred to as "natural", "organic", or "earned" results.[1] In general, the higher ranked on the search results page and more frequently a site appears in the search results list, the more visitors it will receive from the search engine's users; these visitors can then be converted into customers.[2] Local SEO, however, differs in that it is focused on optimizing a business's online presence so that its web pages will be displayed by search engines when users enter local searches for its products or services.[3] Ranking for local search involves a similar process to general SEO but includes some specific elements to rank a business for local search.

Local SEO is about optimizing a business's online presence to attract more business from relevant local searches. The majority of these searches take place on Google, Yahoo, Bing, Yandex, Baidu and other search engines, but for better optimization in a local area, businesses should also use sites like Yelp, Angie's List, LinkedIn, local business directories, social media channels and others.[4]

The origin of local SEO can be traced back[5] to 2003-2005 when search engines tried to provide people with results in their vicinity as well as additional information such as opening times of a store, listings in maps, etc.

Local SEO has evolved over the years to provide a targeted online marketing approach that allows local businesses to appear based on a range of local search signals. This distinguishes it from broader organic SEO, which prioritises the relevance of a search over the distance of the searcher.

Local searches trigger search engines to display two types of results on the Search engine results page: local organic results and the 'Local Pack'.[3] The local organic results include web pages related to the search query with local relevance. These often include directories such as Yelp, Yellow Pages, Facebook, etc.[3] The Local Pack displays businesses that have signed up with Google and taken ownership of their 'Google My Business' (GMB) listing.

The information displayed in the GMB listing and hence in the Local Pack can come from different sources:[6]

Depending on the searches, Google can show relevant local results in Google Maps or Search. This is true on both mobile and desktop devices.[7]

Google has added a new Q&A feature to Google Maps that allows users to submit questions to business owners and allows the owners to respond.[8] This Q&A feature is tied to the associated Google My Business account.

Google Business Profile (GBP), formerly Google My Business (GMB), is a free tool that allows businesses to create and manage their Google Business listing. These listings must represent a physical location that a customer can visit. A Google Business listing appears when customers search for businesses either on Google Maps or in Google SERPs. The accuracy of these listings is a local ranking factor.

Major search engines have algorithms that determine which local businesses rank in local search. Primary factors that impact a local business's chance of appearing in local search include proper categorization in business directories, a business's name, address, and phone number (NAP) being crawlable on the website, and citations (mentions of the local business on other relevant websites like a chamber of commerce website).[9]

In 2016, a study using statistical analysis assessed how and why businesses ranked in the Local Packs and identified positive correlations between local rankings and 100+ ranking factors.[10] Although the study cannot replicate Google's algorithm, it did deliver several interesting findings:

Prominence, relevance, and distance are the three main criteria Google claims to use in its algorithms to show results that best match a user's query.[12]

According to a group of local SEO experts who took part in a survey, links and reviews are more important than ever to rank locally.[13]

Because both Google and Apple offer "near me" as an option to users, some authors[14] report that Google Trends shows very significant increases in "near me" queries. The same authors also report that the factors correlating the most with Local Pack ranking for "near me" queries include the presence of the "searched city and state in backlinks' anchor text" as well as the use of " 'near me' in internal link anchor text".

The Possum update, an important update to Google's local algorithm, was rolled out on 1 September 2016.[15] Summary of the update on local search results:

As previously explained (see above), the Possum update caused similar listings within the same building, or even located on the same street, to be filtered. As a result, only one listing "with greater organic ranking and stronger relevance to the keyword" would be shown.[16] After the Hawk update on 22 August 2017, this filtering seems to apply only to listings located within the same building or close by (e.g. 50 feet), but not to listings located further away (e.g. 325 feet away).[16]

As previously explained (see above), reviews are deemed to be an important ranking factor. Joy Hawkins, a Google Top Contributor and local SEO expert, highlights the problems due to fake reviews:[17]

To find the best SEO company in Sydney, look for a provider with a proven track record of success, transparent reporting, and a clear understanding of your business's goals. Check reviews, case studies, and client testimonials to ensure you are choosing a reputable partner.

SEO agencies in Sydney typically offer comprehensive services such as keyword research, technical audits, on-page and off-page optimization, content creation, and performance tracking. Their goal is to increase your site's search engine rankings and drive more targeted traffic to your website.

Keyword research helps identify the terms and phrases that potential customers are using to search for products or services. By targeting these keywords in your content, you can improve your visibility in search engine results, attract more qualified leads, and drive higher conversion rates.